The blue robot extends its metallic arm and presses a button to signal to the lift it’s waiting. When the lift arrives and doors open, the robot glides in, selects a floor, then coasts to the back.

This might sound like Dr Who B-roll footage, but autonomous, artificially intelligent robots could soon be patrolling Victoria’s quarantine hotels if a trial by security contractor Monjon gets underway.

These robots boast “unwavering attention, perfect recall, and then superhuman sensing”, according to Dr Travis Deyle, founder and CEO of the California-based company producing them, Cobalt Robotics.

As they roam, the robots collect enormous amounts of information from 60 sensors, 360-degree cameras, microphones and two-way video. Their presence could improve monitoring in quarantine hotels. But the notion of such artificial intelligence patrolling the front line of the COVID-19 pandemic also raises urgent questions around consent, privacy and bias relating to the data collected, how it is used, and who has access.

Concerned about potential risks around the use of rapidly advancing artificial intelligence, a report by Australia’s Human Rights Commission into human rights and technology – tabled in Federal Parliament in May – called for a temporary ban on facial recognition and the creation of a national AI strategy and commissioner.

While the planned trial of robots in Victoria’s hotel quarantine was widely reported by media in February, a spokesman for COVID-19 Quarantine Victoria would not confirm for The Citizen whether they were as yet in use. The agency was “continually evaluating options to strengthen CCTV and remote monitoring at quarantine hotels” including “exploring how new technology can play a role”.

Internationally, COVID-19 has seen a dramatic uptick in the use of AI robots. The global population of robots from Cobalt – a leader in security and facilities management robots – has increased tenfold during the pandemic, says Dr Deyle.

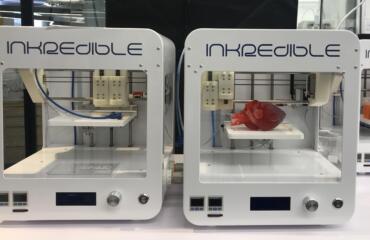

Bryan Goudsblom, CEO of Monjon, the company which proposed the robot trial in quarantine hotels, said data integrity would be critical for quarantine hotels. He expects any information gathered would be stored on servers in Australia. Image credit: Cobalt Robotics Inc.

In 2015, MIT’s Tech Review recognised Dr Deyle as one of its top innovators under 35, and today he is already a veteran of AI and robotics. He earned his PhD building healthcare robots and worked in a Google[x] lab on health technologies. Then in 2016, after “talking to an old friend” who was the head of security for a large tech firm, he and co-founder Erik Schluntz launched Cobalt.

The models coming off his company’s assembly line resemble a modern, friendlier take on a Dalek. They are cone-shaped, covered in blue fabric and move independently, gliding at a pace somewhere between gentle stroll and brisk stride. One of the robot’s unique capabilities is its ability to travel in lifts.

The robot’s AI is honed to detect “anomalies”, anything that’s not quite right. This might be an open door, a person in the corridor, overflowing rubbish or unusual sounds. Sometimes the robot can respond automatically, logging a maintenance request or calling ‘000’. Other times it contacts a human, like an operator or security guard, for help.

Dr Deyle says when the robots start work at a new site, they often flag many “false positives” until they learn how to respond to different situations. In one office the robots wrongly detected a possible intruder a number of times. But the “person” detected was in fact a life-size cardboard cut-out of Disney Princess Jasmine. Now the robots roll past Jasmine knowing she is not a threat.

Most data collected by the Cobalt Robots is stored remotely in the cloud, predominantly on Amazon Web Services servers. This means each robot is learning not just from its own experience, but from the collective experience of the whole fleet.

So, does the arrival of these AI robots foreshadow promise or disaster?

There are pros and cons to AI, as there are for any technology, says Dr Mina Henein. He’s a roboticist and researcher with the 3A Institute, which investigates the broader social, ethical and regulatory context for AI systems.

On the upside, he argues, robots are more efficient and accurate at certain tasks, and can reduce problems due to fatigue and human error. Downsides include privacy risks due to the amount of data collected, and the potential for bias in how robots make decisions and interpret data.

Given the vast amounts of data AI machines are capable of scooping up, the Office of the Victorian Information Commissioner published specific guidelines in April detailing how the state’s privacy rules apply to AI. The guidelines ask public servants and government bodies to first consider whether using AI is necessary or whether other solutions exist given the potential risks related to privacy, bias and discrimination.

Bryan Goudsblom, CEO of Monjon, the company which proposed the robot trial in quarantine hotels, says data integrity would be critical for quarantine hotels, telling The Citizen he expects any information gathered would be stored on servers in Australia.

The potential for bias, according to Dr Henein, exists for all AI robots, as it does for humans.

Bias can creep in when robots are trained on information that was originally intended for a different purpose, or because of the way they interpret data. In 2018, Reuters reported Amazon stopped using an AI hiring tool after it was found to be sexist. The tool discriminated against women after it was trained on resumes of previous successful candidates, who were mainly men. Facial recognition technologies are beset with concerns about racial and gender bias.

Dr Deyle says much of the information collected by his robots can be measured and checked in the real world. This means the company can quantify bias and work to reduce it. He notes that the robots are not currently “doing things like facial recognition”.

The Cobalt Robot is not the only AI robot being used in a COVID-19 setting. A Texas research lab found hundreds of different robots were being used in pandemic-related roles, from quarantine to telemedicine to socially-distanced delivery.

Earlier this year, Australian start-up August Robotics launched ‘Diego’, a tall, white disinfectant robot that cleans rooms using blasts of high intensity ultraviolet light. In China, “Little Peanut”, a small, round robot, reportedly zips along hotel corridors delivering food to quarantining travellers while shouting, “Hello everyone. Cute Little Peanut is serving food to you now. Enjoy your meal”.

Unlike Little Peanut, the Cobalt Robot doesn’t have its own voice or speak by itself. Instead, it provides a communication interface between real people – someone in a command centre, for example, speaking to someone onsite via the robot’s video and audio functions.

Dr Deyle says voice recognition and speech technology – similar to that used by a Google Home – isn’t yet accurate or fast enough for the kind of emergency or healthcare situations the robot operates in. And anyway, he adds, the “creep factor” is too high.

People don’t seem to find the robots creepy at all. Rather, they become “almost like the office pet”, according to Dr Deyle. Some customers dress them up for Halloween or Christmas, and most choose to name their robots.

“We’ve had Larry, Curly and Moe, we’ve had Salt and Pepa”, he says, while others have been named after different Pokemon.